This is the 4th hotfix in the yarnd v0.7.x series, needed purely because of my sheer tiredness and my own limitations (I’m not perfect 😅). Sorry for the noise! Please upgrade to this hotfix when you can. Cheers! 🙇‍♂️

As per usual, happy Yarn’ing and please send all feedback to @prologic@twtxt.net or reply to this Twt 🤗

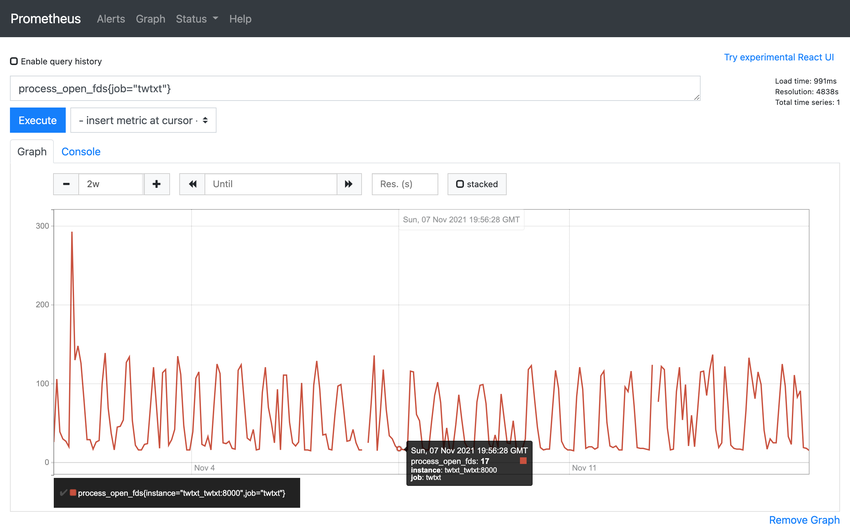

Woke to find that yarnd wasn’t responding; “too many open files” errors in the log. Just updated.

@jlj@twt.nfld.uk Oh? 🤔 That’s a bit odd! 😳 I hope there isn’t an fd leak somewhere in the code 😥

I’ll have a poke around my pod’s monitoring…

Seems OK now… It started around 4am my time, I guess (relevant syslog extract), presumably related to the upgrade I’d performed about five hours earlier.

@jlj@twt.nfld.uk I didn’t see any clues in that syslog extract, hmmm

@jlj@twt.nfld.uk Hmmm, I haven’t seen any “fd leak” in my own pod in the past ~2 weeks either. 🤔 I can’t explain what happened to your pod without more data 😢

Fair enough. Any of these settings jump out as a problem?

```
root@royale:~# cat /proc/21462/limits
Limit                     Soft Limit           Hard Limit           Units
Max cpu time              unlimited            unlimited            seconds
Max file size             unlimited            unlimited            bytes
Max data size             unlimited            unlimited            bytes
Max stack size            8388608              unlimited            bytes
Max core file size        0                    unlimited            bytes
Max resident set          unlimited            unlimited            bytes
Max processes             1376                 1376                 processes
Max open files            1024                 524288               files
Max locked memory         65536                65536                bytes
Max address space         unlimited            unlimited            bytes
Max file locks            unlimited            unlimited            locks
Max pending signals       1376                 1376                 signals
Max msgqueue size         819200               819200               bytes
Max nice priority         0                    0
Max realtime priority     0                    0
Max realtime timeout      unlimited            unlimited            us
```

That’s the main yarnd process on my system currently, btw. Maybe I should try raising that soft limit on open files. That max core file size soft limit looks a bit worrying as well; not sure what that refers to, mind.

@jlj@twt.nfld.uk You might want to increase max open files a bit, but other than that it looks okay 👌
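On raising that soft limit: an unprivileged process may lift its own soft `RLIMIT_NOFILE` (the "Max open files" row) as far as its hard limit, 524288 in the output above, so a daemon can do this itself at startup. Below is a minimal Go sketch of that, assuming Linux; `raiseNoFileLimit` is a hypothetical helper, not yarnd code. Go 1.19 and later already do this automatically for programs that import `os`, so it mostly matters for binaries built with older toolchains. If yarnd runs under systemd, setting `LimitNOFILE=` in its service unit is the usual alternative that needs no code change.

```go
package main

import (
	"fmt"
	"syscall"
)

// raiseNoFileLimit is a hypothetical helper that lifts the current process's
// soft RLIMIT_NOFILE ("Max open files" in /proc/<pid>/limits) up to its hard
// limit and returns the soft and hard values now in effect. Per setrlimit(2),
// an unprivileged process may raise its soft limit as far as the hard limit,
// so no root access is needed for this step.
func raiseNoFileLimit() (uint64, uint64, error) {
	var rl syscall.Rlimit
	if err := syscall.Getrlimit(syscall.RLIMIT_NOFILE, &rl); err != nil {
		return 0, 0, fmt.Errorf("getrlimit: %w", err)
	}
	if rl.Cur < rl.Max {
		rl.Cur = rl.Max // e.g. 1024 -> 524288 with the limits shown above
		if err := syscall.Setrlimit(syscall.RLIMIT_NOFILE, &rl); err != nil {
			return 0, 0, fmt.Errorf("setrlimit: %w", err)
		}
	}
	// Read the limits back so the caller sees what the kernel actually applied.
	if err := syscall.Getrlimit(syscall.RLIMIT_NOFILE, &rl); err != nil {
		return 0, 0, fmt.Errorf("getrlimit: %w", err)
	}
	return rl.Cur, rl.Max, nil
}

func main() {
	soft, hard, err := raiseNoFileLimit()
	if err != nil {
		fmt.Println("could not raise the open-file limit:", err)
		return
	}
	fmt.Printf("max open files: soft=%d hard=%d\n", soft, hard)
}
```

After a successful call the soft and hard values printed should match.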